What happens when your head hits a wall at 18 MPH? Nothing good, we can assure you, and yet those kinds of impacts are a daily occurrence in American football.

Sports medicine professionals and research organizations continue to raise awareness and educate athletes about the risk of concussive brain injuries and the limited protection of helmets.

With the mounting attention drawn to this issue, the NFL even created an engineering challenge to help drive the development of safer helmets.

Not long ago, when a client came to us with a concept for an improved helmet, we saw it as an exciting opportunity to make a positive impact on this serious and difficult-to-solve issue.

The concept was innovative: develop a helmet with a unique liquid damping system designed to minimize traumatic brain injuries in high-impact sports.

However, when we started, the project was full of questions and ambiguities. Very little was known in advance, except that our job was to refine the client’s existing helmet impact test ideas into something that performed consistently and could be manufactured in large quantities.

Ultimately, we would need to solve a number of complex and concurrent objectives under significant budget and time constraints:

From decades of experience helping clients solve complex engineering challenges, we knew that one of our best assets at the start of this type of work is a flexible mindset. This is especially true when juggling competing objectives, multiple constraints, and ambitious concepts that carry a fair amount of ambiguity.

So, we did what we do best: we embraced the ambiguity! We dove in and tackled what was possible each day, and then re-evaluated the next day. This iterative problem-solving approach helped us start small but move forward quickly by applying what we learned.

Testing was a major part of the project. We were constantly building different prototypes, sometimes several a day, and they all needed to be tested.

Let’s look at how testing unfolded and what we learned about developing the best solutions.

Our client was a small startup with limited financial resources. We couldn’t simply use a large commercial impact tester costing thousands of dollars; that alone might have consumed the entire project budget.

We needed something relatively inexpensive, with the flexibility to be modified and reconfigured as the project evolved.

Plus, since the project took place at the height of the COVID work-from-home era, our test fixture also had to fit in the basement of our test engineer’s home!

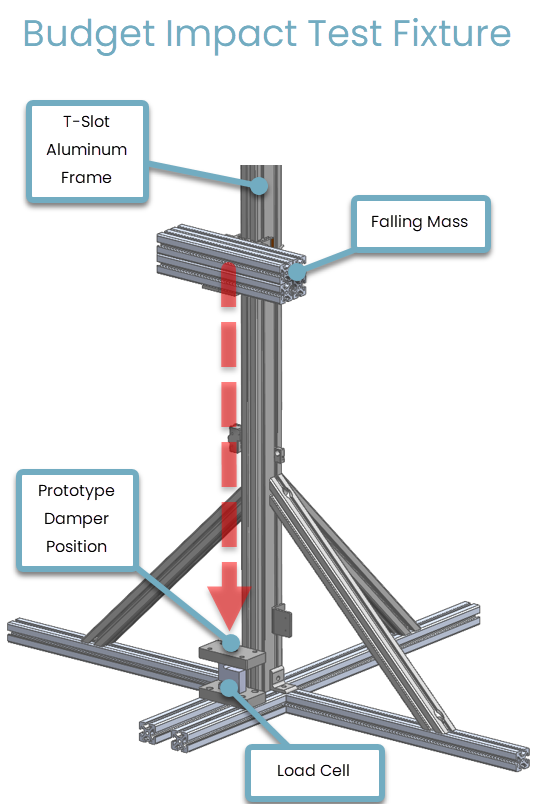

We chose to build a low-cost test fixture with T-slot aluminum framing because it’s easy to assemble and reconfigure. We ordered all the pieces pre-cut from an industrial supplier and assembled them in an afternoon.

The basic setup was quite simple:

The real challenge, though, wasn’t the mechanical design.

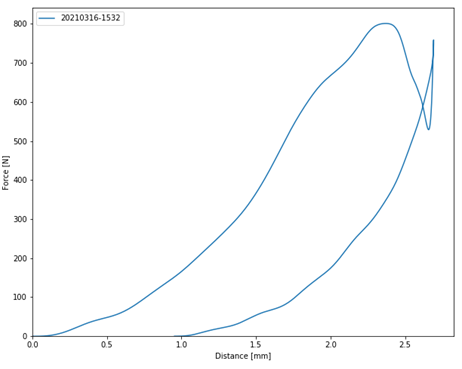

To evaluate each damper, we needed to make accurate measurements of force and displacement during the impact.
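Force and displacement are most useful together: the area under the force-displacement curve is the energy a damper absorbs, and peak force is a natural companion metric. The post does not say which metrics the team actually computed, so the helper below (`damper_summary`, a hypothetical name) is only a sketch of that calculation, assuming both signals were sampled at the same instants and recorded in newtons and metres.

```python
from typing import Sequence, Tuple


def damper_summary(force_n: Sequence[float], disp_m: Sequence[float]) -> Tuple[float, float]:
    """Return (peak force in N, net absorbed energy in J) for one impact.

    Hypothetical helper: assumes force and displacement were sampled at the
    same instants, so sample i of each sequence belongs together.
    """
    if len(force_n) != len(disp_m) or len(force_n) < 2:
        raise ValueError("need at least two paired force/displacement samples")
    peak = max(force_n)
    energy = 0.0
    # Trapezoidal rule: add the area under F(x) between consecutive samples.
    # If the impactor rebounds, dx is negative and the returned energy is
    # subtracted, so the result is the net energy absorbed.
    for i in range(1, len(force_n)):
        dx = disp_m[i] - disp_m[i - 1]
        energy += 0.5 * (force_n[i] + force_n[i - 1]) * dx
    return peak, energy
```

For example, a triangular pulse peaking at 2000 N over 20 mm of travel gives `damper_summary([0, 1000, 2000, 1000, 0], [0, 0.005, 0.01, 0.015, 0.02])`, a peak of 2000 N and about 20 J of absorbed energy.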

Because of the various project ambiguities, we decided to start out with a minimally viable test setup, and then upgrade it as we learned more.

The force measurements were straightforward:

We wanted the displacement measurements to be non-contact, because we didn’t want the measurement process to interfere with the impact.

After considering several alternatives, we decided to experiment with a video-based method. We were already planning to capture high-speed videos, so perhaps we could also use those videos to measure distance?

We already had a digital camera on hand (a mid-range SLR) that could record video at 960 frames per second. It wasn’t a true high-speed camera, but it was good enough to start with and cost us nothing.
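A quick calculation shows why 960 frames per second is a starting point rather than a true high-speed rate. Assuming, purely for illustration, that the impactor moves at the 18 MPH quoted at the top of this post:

```latex
\begin{aligned}
v &= 18~\text{mph} \times 0.44704~\tfrac{\text{m/s}}{\text{mph}} \approx 8.05~\text{m/s} \\
\Delta t &= \frac{1}{960~\text{s}^{-1}} \approx 1.04~\text{ms} \\
\Delta x &= v\,\Delta t \approx 8.05~\text{m/s} \times 1.04 \times 10^{-3}~\text{s} \approx 8.4~\text{mm per frame}
\end{aligned}
```

If a damper compresses over only a few centimetres (an assumption; the post does not give the stroke), the whole impact spans just a handful of frames at that speed.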

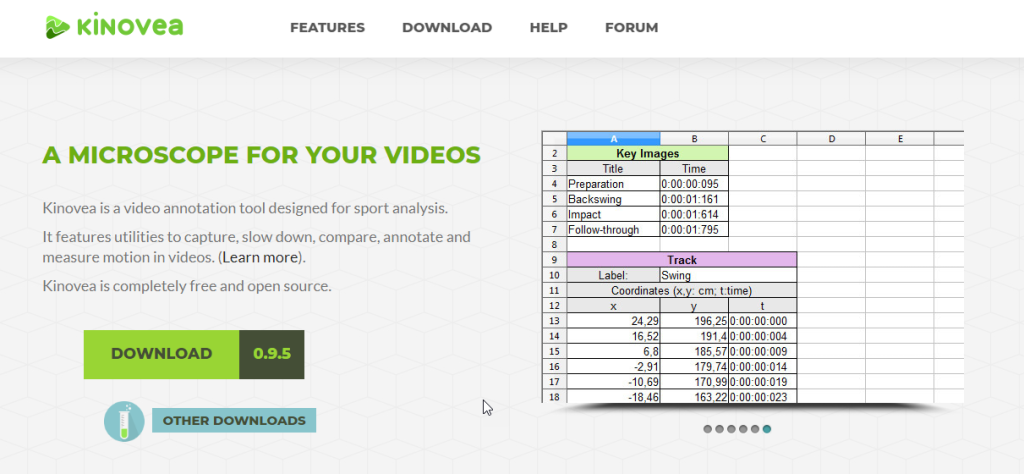

To extract distance information from the videos, we used the open-source Kinovea software.

This package was originally developed for sports analysis, but it works well for all kinds of video tracking applications.
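Turning a tracked point into a distance comes down to a scale factor and the frame rate. The sketch below is a hypothetical illustration of that conversion, not Kinovea’s code or export format: it assumes the marker’s vertical pixel positions have already been extracted frame by frame, and that a reference object of known length appears in the same plane as the marker. Lens distortion and perspective are ignored.

```python
from typing import List, Sequence, Tuple

FPS = 960.0  # frame rate of the camera described above


def pixels_to_displacement(
    y_px: Sequence[float],
    ref_length_mm: float,
    ref_length_px: float,
    fps: float = FPS,
) -> List[Tuple[float, float]]:
    """Convert tracked vertical pixel positions into (time_ms, displacement_mm).

    Hypothetical illustration: ref_length_mm is the true length of a reference
    object that spans ref_length_px pixels in the image. The first frame is
    taken as zero displacement. In many image coordinate systems rows increase
    downward, so a positive value means the marker moved down the frame.
    """
    scale = ref_length_mm / ref_length_px  # millimetres per pixel
    y0 = y_px[0]
    return [(1000.0 * i / fps, (y - y0) * scale) for i, y in enumerate(y_px)]
```

With a 50 mm reference spanning 100 pixels, each pixel is 0.5 mm, so a marker that moves from row 100 to row 130 over two frames has traveled 15 mm in about 2.1 ms.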

The video-based method worked surprisingly well, considering it was something we cobbled together at little to no expense.

But as our helmet impact testing and design work increased, the shortcomings of the video method became more obvious.

Even under the best of circumstances, this data-analysis process took 10 to 15 minutes per run. The resulting plots were only of medium quality because of the interpolation and manual alignment steps involved.
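Alignment and interpolation are needed because the camera and the force measurement were presumably not synchronized and sampled at different rates. The post does not describe exactly how the two records were combined; the sketch below shows one plausible approach, assuming both records contain the impact and that the first sample crossing a chosen threshold marks the same instant in each. It uses NumPy’s `np.interp` for linear interpolation; the function name and thresholds are hypothetical.

```python
import numpy as np


def align_and_resample(
    t_force_s: np.ndarray,
    force_n: np.ndarray,
    t_video_s: np.ndarray,
    disp_mm: np.ndarray,
    force_threshold_n: float,
    disp_threshold_mm: float,
) -> np.ndarray:
    """Return video displacement resampled onto the force record's timestamps.

    Hypothetical sketch: the first sample above each threshold is treated as
    the same instant (impact onset), then the slower video data is linearly
    interpolated onto the force time base.
    """
    above_f = force_n > force_threshold_n
    above_v = disp_mm > disp_threshold_mm
    if not above_f.any() or not above_v.any():
        raise ValueError("a threshold was never crossed; cannot align")
    # argmax on a boolean array gives the index of the first True.
    i_f = int(np.argmax(above_f))
    i_v = int(np.argmax(above_v))
    # Shift the video time base so both onsets coincide.
    t_video_aligned = t_video_s - t_video_s[i_v] + t_force_s[i_f]
    # Linear interpolation; points outside the video span hold the end values.
    return np.interp(t_force_s, t_video_aligned, disp_mm)
```

A threshold crossing only locates onset to within one video frame, about 1 ms at 960 frames per second, so some misalignment is unavoidable with this kind of approach.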

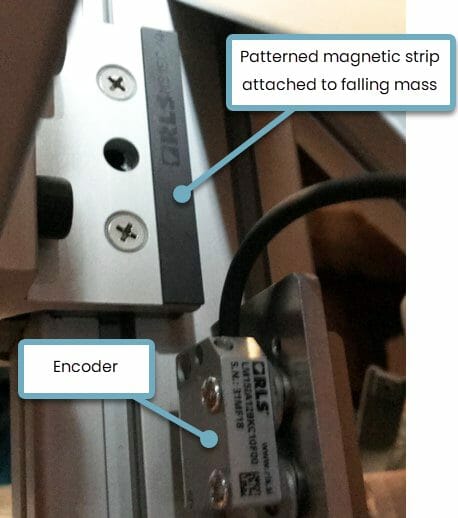

Our distance measurements improved dramatically when we replaced the video-based method with an off-the-shelf linear encoder.
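The post does not say which encoder was used. Many off-the-shelf incremental linear encoders output two square waves, A and B, in quadrature, and position is recovered by counting their transitions. In practice this is often done by a dedicated counter input on the data-acquisition hardware rather than in software; the sketch below only illustrates the counting logic, and the function name and the resolution used in the example are hypothetical.

```python
from typing import Iterable, Tuple

# Transition table for x4 quadrature decoding: (previous AB, current AB) -> count step.
# States are encoded as 2-bit integers (A << 1) | B. Which direction counts as
# positive is a wiring convention.
_STEP = {
    (0b00, 0b01): +1, (0b01, 0b11): +1, (0b11, 0b10): +1, (0b10, 0b00): +1,
    (0b00, 0b10): -1, (0b10, 0b11): -1, (0b11, 0b01): -1, (0b01, 0b00): -1,
}


def decode_quadrature(samples: Iterable[Tuple[int, int]], um_per_count: float) -> float:
    """Return net displacement in micrometres from a stream of (A, B) logic samples.

    Hypothetical sketch: assumes samples arrive fast enough that at most one
    channel changes between consecutive samples. Repeated states add nothing;
    a jump where both channels change is a missed transition and raises.
    """
    count = 0
    prev = None
    for a, b in samples:
        state = (a << 1) | b
        if prev is not None and state != prev:
            step = _STEP.get((prev, state))
            if step is None:
                raise ValueError(f"invalid transition {prev:02b} -> {state:02b}")
            count += step
        prev = state
    return count * um_per_count
```

One full AB cycle, `[(0, 0), (0, 1), (1, 1), (1, 0), (0, 0)]`, produces four counts; with an assumed 5 µm per count, `decode_quadrature` returns 20.0, and the same cycle in reverse returns -20.0.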

The encoder arrived at an ideal point in the project: we had made some major design decisions but still needed to test dozens of different variations to optimize performance.

What previously took 15 minutes with several manual steps was now happening automatically in less than 5 seconds!

As we described earlier, ambiguity requires engineers to embrace the unknown in order to move quickly and discover the best solutions.

If we had approached this project with a more rigid product development process, we would not have had the freedom to make major, on-the-fly changes, applying what we learned at each stage about the product and the testing.

Similarly, it would have been foolish to try to build the “perfect” test setup on Day 1. Doing that would likely have led us to build the wrong thing, since we didn’t yet understand what we truly needed.

Maintaining flexibility while working through each objective proved to be crucial for this helmet impact testing project.


Looking for a partner in custom hardware testing?

Our engineers bring deep expertise in mechanical, electrical, and firmware test design. We build tailored protocols and fixtures that capture the right data for analysis, failure investigation, and performance verification.

Whether you need rapid turnaround or support through complex product development, AC’s test engineering team moves fast to deliver actionable insights and design recommendations.

Ready to talk through your testing challenge?